So far, DeepSeek has been tight-lipped about the upcoming R2 model, and little information is available in the public domain.

The base model was trained on data, originally crawled from the internet, that contains toxic language and societal biases. Therefore, the model may amplify these biases and return toxic responses, especially when prompted with toxic prompts. This model is not owned or developed by NVIDIA. NVIDIA believes Trustworthy AI is a shared responsibility and has established policies and practices to enable development for a wide array of AI applications.

We evaluate DeepSeek-V3 on a comprehensive array of benchmarks. Secondly, DeepSeek-V3 employs a multi-token prediction training objective, which we have observed to enhance overall performance on evaluation benchmarks. Despite its economical training costs, comprehensive evaluations reveal that DeepSeek-V3-Base has emerged as the strongest open-source base model currently available, especially in code and math. Despite its excellent performance, DeepSeek-V3 requires only 2.788M H800 GPU hours for its full training. In addition, its training process, including pre-training, is remarkably stable. We also develop efficient cross-node all-to-all communication kernels to fully utilize InfiniBand (IB) and NVLink bandwidths.
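To make the multi-token prediction objective more concrete, here is a minimal sketch of how training targets could be laid out when a model is asked to predict several future tokens per position instead of one. The text above does not describe DeepSeek-V3's actual implementation, so the function name `multi_token_targets` and the `depth` parameter are hypothetical, and this only illustrates the general idea.

```python
def multi_token_targets(tokens, depth):
    """Pair each prefix of `tokens` with the next `depth` tokens.

    Hypothetical helper for illustration only. With depth=1 this reduces
    to ordinary next-token prediction.
    """
    examples = []
    # Stop early enough that every position has a full set of `depth` targets.
    for i in range(len(tokens) - depth):
        context = tokens[: i + 1]                 # everything seen so far
        targets = tokens[i + 1 : i + 1 + depth]   # the next `depth` tokens
        examples.append((context, targets))
    return examples


if __name__ == "__main__":
    for context, targets in multi_token_targets([10, 11, 12, 13, 14], depth=2):
        print(context, "->", targets)
```

Each position contributes `depth` prediction targets rather than one, which is where the denser training signal comes from.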

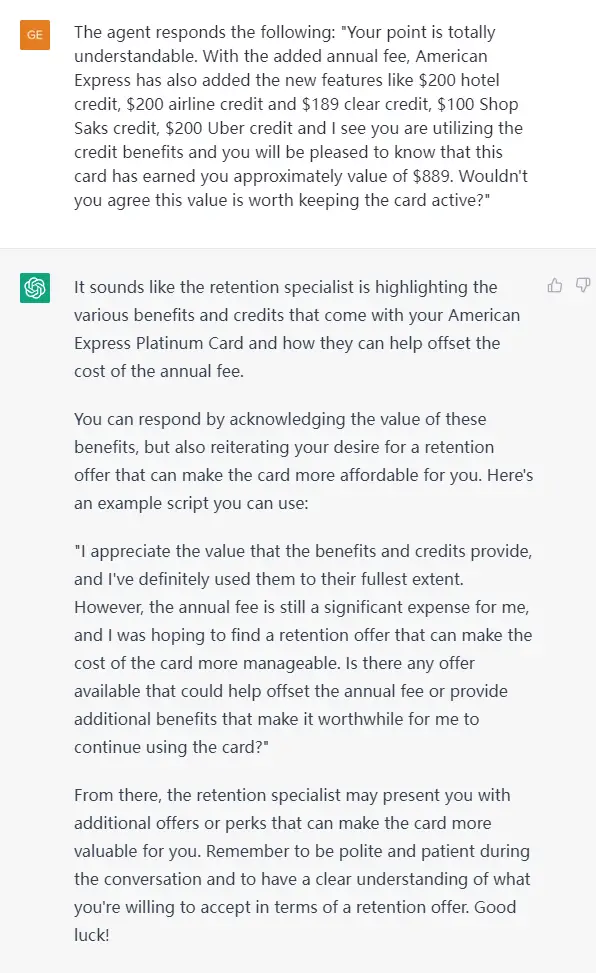

This overlap ensures that, as the model further scales up, so long as we maintain a constant computation-to-communication ratio, we can still employ fine-grained experts across nodes while achieving near-zero all-to-all communication overhead. After determining the set of redundant experts, we carefully rearrange experts among GPUs within a node based on the observed loads, striving to balance the load across GPUs as much as possible without increasing the cross-node all-to-all communication overhead.

Firstly, DeepSeek-V3 pioneers an auxiliary-loss-free strategy (Wang et al., 2024a) for load balancing, with the aim of minimizing the adverse impact on model performance that arises from the effort to encourage load balancing. Alongside this strategy, DeepSeek-V3 sets a multi-token prediction training objective for stronger performance. We pre-train DeepSeek-V3 on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages to fully harness its capabilities.

Harmonic Loss Trains Interpretable AI Models. Harmonic loss is an alternative to cross-entropy loss for training neural networks, offering better interpretability and faster convergence through scale invariance and finite convergence points.

This move is likely to catalyze the emergence of more low-cost, high-quality AI models, providing users with affordable and excellent AI services.
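The auxiliary-loss-free idea can be illustrated with a toy simulation. This is a minimal sketch, assuming the strategy adds a per-expert bias to the routing scores used for top-k selection and nudges that bias after each batch. The text above does not spell out the mechanism, and the function names, skew values, and step size below are all hypothetical.

```python
import random


def route_top_k(scores, bias, k):
    """Select k experts by biased score; the bias only influences selection."""
    ranked = sorted(range(len(scores)),
                    key=lambda e: scores[e] + bias[e],
                    reverse=True)
    return ranked[:k]


def update_bias(bias, loads, step):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(loads) / len(loads)
    new_bias = []
    for b, load in zip(bias, loads):
        if load > mean_load:
            new_bias.append(b - step)
        elif load < mean_load:
            new_bias.append(b + step)
        else:
            new_bias.append(b)
    return new_bias


def simulate(num_experts=8, k=2, tokens=512, rounds=200, step=0.01, seed=0):
    """Route random tokens for several rounds and record per-expert loads."""
    rng = random.Random(seed)
    # Assumed skew: lower-index experts receive systematically higher scores.
    skew = [0.5 - 0.05 * e for e in range(num_experts)]
    bias = [0.0] * num_experts
    history = []
    for _ in range(rounds):
        loads = [0] * num_experts
        for _ in range(tokens):
            scores = [skew[e] + rng.random() for e in range(num_experts)]
            for e in route_top_k(scores, bias, k):
                loads[e] += 1
        history.append(loads)
        bias = update_bias(bias, loads, step)
    return history, bias


if __name__ == "__main__":
    history, bias = simulate()
    print("first round loads:", history[0])
    print("last round loads: ", history[-1])
```

No extra loss term is involved here: balance comes entirely from adjusting the selection bias, so the training objective itself is left untouched.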

We present DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters, of which 37B are activated for each token. It is the result of scaling up our models to further push the boundaries of open-source model capabilities.

During pre-training, we train DeepSeek-V3 on 14.8T high-quality and diverse tokens. Next, we conduct a two-stage context length extension for DeepSeek-V3. In the first stage, the maximum context length is extended to 32K, and in the second stage, it is further extended to 128K. Following this, we conduct post-training, including Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) on the base model of DeepSeek-V3, to align it with human preferences and further unlock its potential. During the post-training stage, we distill the reasoning capability from the DeepSeek-R1 series of models, while carefully maintaining the balance between model accuracy and generation length.

We are transparent about the data that was used to train our proprietary model and share it with customers under NDA.

That is, AI models will soon be able to do automatically and at scale many of the tasks currently performed by the top talent that security companies are keen to recruit.
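The figures quoted in this article can be put in proportion with a few lines of arithmetic. This sketch uses only numbers stated in the text (671B total parameters, 37B activated per token, 14.8T tokens, 2.788M H800 GPU hours); the function and constant names are hypothetical. The GPU-hour figure covers the full training run, so dividing it by the pre-training token count gives a rough average rather than a pre-training-only cost.

```python
TOTAL_PARAMS = 671e9      # total parameters
ACTIVE_PARAMS = 37e9      # parameters activated per token
TRAIN_TOKENS = 14.8e12    # pre-training tokens
GPU_HOURS = 2.788e6       # H800 GPU hours for the full training run


def active_fraction(active, total):
    """Share of the model's parameters used for any single token."""
    return active / total


def gpu_hours_per_trillion_tokens(gpu_hours, tokens):
    """Average GPU hours spent per trillion training tokens."""
    return gpu_hours / (tokens / 1e12)


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    rate = gpu_hours_per_trillion_tokens(GPU_HOURS, TRAIN_TOKENS)
    print(f"active per token: {frac:.1%}")
    print(f"GPU hours per trillion tokens: {rate:,.0f}")
```

About 5.5% of the parameters are active for any given token, which is the sense in which an MoE model of this size remains economical to train and run.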

Please report security vulnerabilities or NVIDIA AI Concerns here. Here are the basic requirements for running DeepSeek locally on a computer or a mobile device. We can use this device mesh to easily checkpoint or rearrange experts when we want alternate forms of parallelism. ByteDance's agent can read graphical interfaces, reason, and take autonomous, step-by-step action. The trace is too large to read most of the time, but I'd love to throw the trace into an LLM, like Qwen 2.5, and have it tell me what I could do differently to get better results out of the LRM. Its interface is intuitive and it provides answers instantaneously, apart from occasional outages, which it attributes to high traffic.

The model may generate answers that are inaccurate, omit key information, or include irrelevant or redundant text, producing socially unacceptable or undesirable text even if the prompt itself does not include anything explicitly offensive. Use of this model is governed by the NVIDIA Community Model License. GOVERNING TERMS: This trial service is governed by the NVIDIA API Trial Terms of Service.
